Positioning in the research landscape

Automated medical image analysis is increasingly used in clinical practice, particularly for volumetric CT scans and whole-slide images (WSI). For such large and complex data, model predictions must be accurate and interpretable.

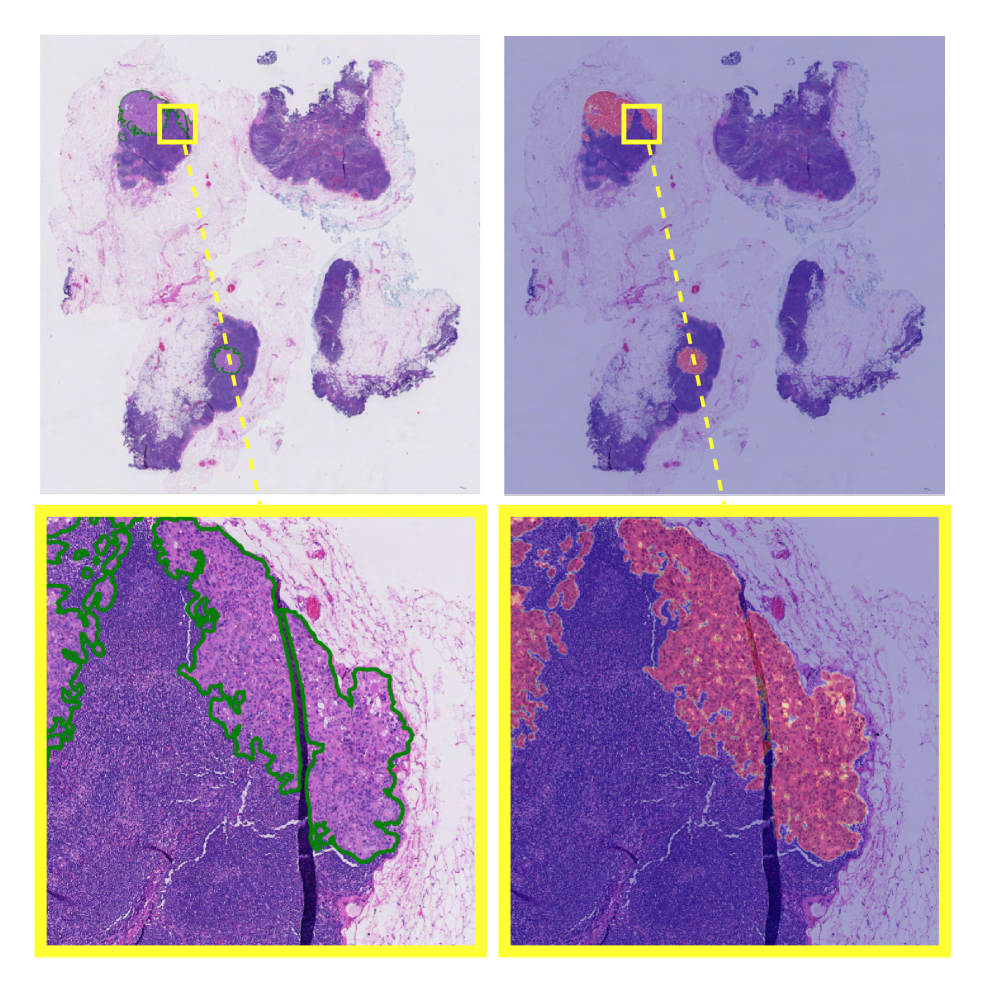

Earlier research published on arXiv explored how explainable weakly supervised methods can incorporate aggregation mechanisms to generate diagnostic heatmaps directly from image-level labels, enabling localization of clinically relevant regions. At a foundational level, this work reflects core computer vision principles in healthcare, where image embedding models are designed to support both accurate classification and pixel-level interpretability for clinical validation.

Why weak supervision matters in clinical imaging

Pixel- and voxel-level masks are expensive to obtain at scale; weak labels (scan-level, slide-level) are far cheaper. INSIGHT shows how an image recognition model trained on weak labels can reach strong classification performance while producing segmentation-like cues (heatmaps) that guide review. Complementary work on weakly and semi-supervised medical segmentation (e.g., superpixel propagation, counterfactual inpainting) underscores the same trend: scalable supervision with faithful localization.

Clinical Vision Model Foundations

To implement explainable weakly-supervised medical image models like INSIGHT, which embed interpretability directly into the architecture rather than relying on post-hoc methods, we can break the system down into a set of foundational components that support both accurate classification and built-in localization.

- Encoders. ViT/CNN backbones for deep learning computer vision on 2D/3D inputs. Patch- or region-level features make it possible to tile WSIs and CT volumes.

- Aggregators. Set/sequence modules (MIL variants or INSIGHT-style) that pool region features while producing internal heatmaps for interpretability.

- Segmentation heads. U-Net/DeepLab family (when dense labels exist) and weakly supervised variants (scribbles, points, slide-level).

- Detection heads. Object detection models for lesions, devices, or specific anatomical findings; semantic segmentation for organs/tumors/hematomas.

- Embeddings & retrieval. Image embeddings support cohort search (find scans “like this”), QA of labeling drift, and case-based reasoning.

These components together drive image recognition AI systems that clinicians can interrogate not just via class scores but also via where the model looked.
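To make the aggregator idea concrete, here is a minimal sketch of generic attention-based pooling over patch features, written in plain Python. It is not INSIGHT's actual architecture. The names `attention_pool`, `attn_vector`, and `weights_to_grid` are hypothetical, and in a real model the scoring vector would be learned rather than hard-coded. The point is only that the same weights that pool the patches can be read back as a coarse heatmap.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_pool(patch_features, attn_vector):
    """Pool per-patch features into one scan-level feature.

    patch_features: list of equal-length feature vectors, one per patch.
    attn_vector: hypothetical scoring vector (learned in a real model).
    Returns (pooled_feature, weights); the weights double as a heatmap.
    """
    # One scalar relevance score per patch (dot product with attn_vector).
    scores = [sum(f * a for f, a in zip(feat, attn_vector))
              for feat in patch_features]
    weights = softmax(scores)
    dim = len(patch_features[0])
    # Weighted average of patch features, dimension by dimension.
    pooled = [sum(w * feat[d] for w, feat in zip(weights, patch_features))
              for d in range(dim)]
    return pooled, weights


def weights_to_grid(weights, n_cols):
    """Reshape flat per-patch weights into rows for display as a heatmap."""
    return [weights[i:i + n_cols] for i in range(0, len(weights), n_cols)]


# Toy input: four patches on a 2x2 grid; the third patch stands out.
features = [[0.1, 0.0], [0.2, 0.1], [3.0, 2.5], [0.0, 0.2]]
attn = [1.0, 1.0]  # assumed weights, for illustration only
pooled, weights = attention_pool(features, attn)
heatmap = weights_to_grid(weights, n_cols=2)
```

A classifier head would consume `pooled`, while `heatmap` is what a reviewer sees overlaid on the image.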

Multimodal Vision Pipelines

Clinical workflows mix images with metadata and reports. A reliable machine learning pipeline therefore includes the following stages:

- Ingest & standardize. De-identification, DICOM harmonization, intensity/spacing normalization.

- Region processing. Tile large images (WSI) or extract crops/slices (CT/MRI); cache features.

- Weakly supervised aggregator. The INSIGHT-style module yields a classification and explanation heatmap.

- Optional dense tasks. When masks exist, train semantic segmentation models; otherwise, convert heatmaps into candidate regions.

- Retrieval & triage. An image embedding model indexes cases and supports file classification (modality/body part) and prioritization queues.

- Clinical context fusion. Limited text cues (indication strings, scanner notes) can be added carefully; the image remains the primary signal.

- Human review & reporting. Radiologists/pathologists validate heatmaps, adjust findings, and export structured summaries.
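The region-processing stage can be sketched as follows. This is an illustrative helper, not part of any specific library: `tile_coordinates` and its parameters are assumed names, and a real WSI pipeline would read pixels through a slide reader and skip background tiles. It shows one way to cover an image with fixed-size tiles so that no tile runs past the border.

```python
def tile_coordinates(width, height, tile, stride):
    """Return (x, y) top-left corners of tiles covering a width x height image.

    The last row and column are shifted inward so every tile stays inside
    the image, at the cost of some overlap along the trailing edges.
    """
    if tile > width or tile > height:
        raise ValueError("tile is larger than the image")
    if stride <= 0:
        raise ValueError("stride must be positive")

    def starts(extent):
        positions = list(range(0, extent - tile + 1, stride))
        if positions[-1] != extent - tile:
            positions.append(extent - tile)  # cover the trailing edge
        return positions

    return [(x, y) for y in starts(height) for x in starts(width)]


# Example: a 1000 x 600 image cut into non-overlapping 256-pixel tiles,
# except where the last row/column must shift inward.
coords = tile_coordinates(width=1000, height=600, tile=256, stride=256)
```

Features computed per tile can then be cached under their `(x, y)` key, so later stages (aggregation, retrieval) do not need to re-encode the image.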

Note on terminology crossover: phrases like AI document processing, automated document processing, intelligent document processing, and data extraction from documents refer to document AI. We mention them only to contrast with imaging AI: clinical images rarely require OCR, although downstream medical abbreviation handling is used in text classification of files (e.g., modality tags) and report QC. Terms such as OCR medical abbreviation, vision AI solution, and visual artificial intelligence appear here only to clarify vocabulary used across healthcare AI stacks. The clinical-imaging pipeline remains pixel-first.

Anomaly detection in clinical imaging

Anomaly detection is fundamental for safety and QA:

- Acquisition anomalies. Motion artifacts, missing series, and corrupted slices are detected via embedding distance or reconstruction residuals (anomaly detection ML).

- Population OOD. Rare anatomies or non-target devices are flagged by out-of-distribution screening before inference.

- Model behavior. Heatmap quality checks (e.g., is attention off-organ?) and anomaly detection software alerts for unexpected activation patterns.

These methods reduce silent failures and guide human verification, which matters especially when AI operates under healthcare constraints.
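As a rough illustration of the embedding-distance check mentioned above, the sketch below flags an embedding when it lies far from the centroid of a reference set. The helper names and the `mean + k * std` cutoff are assumptions chosen for brevity; production systems often use richer scores (for example Mahalanobis or nearest-neighbor distance) and calibrate thresholds on held-out data.

```python
import math


def centroid(vectors):
    """Component-wise mean of a list of equal-length vectors."""
    n = len(vectors)
    return [sum(v[d] for v in vectors) / n for d in range(len(vectors[0]))]


def euclidean(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def fit_threshold(reference, k=3.0):
    """Return (centroid, cutoff) where cutoff = mean + k * std of the
    distances from each reference embedding to the centroid."""
    c = centroid(reference)
    dists = [euclidean(v, c) for v in reference]
    mean = sum(dists) / len(dists)
    std = math.sqrt(sum((d - mean) ** 2 for d in dists) / len(dists))
    return c, mean + k * std


def is_anomalous(embedding, c, cutoff):
    """True when the embedding is farther from the centroid than the cutoff."""
    return euclidean(embedding, c) > cutoff


# Toy reference embeddings standing in for in-distribution scans.
reference = [[0.0, 0.0], [0.1, -0.1], [-0.1, 0.1], [0.05, 0.05]]
c, cutoff = fit_threshold(reference)
flags = [is_anomalous(e, c, cutoff) for e in ([0.02, 0.0], [5.0, 5.0])]
```

Flagged cases would be routed to human review rather than rejected automatically, in line with the verification role described above.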

Supervised vs unsupervised trade-offs

- Unsupervised/self-supervised. Pretrain encoders on large unlabeled PACS archives; learn stable image embedding spaces for retrieval, triage, and OOD checks.

- Weakly supervised. INSIGHT-style learning from scan/slide labels yields class and localization without dense masks.

- Supervised. When pixel/voxel labels are available, fine-tune semantic segmentation for precise boundaries or object detection models for discrete lesions.

In deployment, teams combine these methods by starting with SSL pretraining, followed by weak-label training, and concluding with targeted supervised refinement. This approach matches realistic data economics and regulatory auditing.

Data operations and labeling

Clinical datasets need PHI-safe processes and precise instructions:

- Data labeling solutions should support scribbles/points for WSSS, inter-rater consensus, and uncertainty tags.

- Training data labeling must record provenance (rater IDs, instructions, scanner metadata).

- When dense labels are infeasible, an image labeling service can curate region proposals from model heatmaps for rapid expert verification (“label-from-explanation”).
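The “label-from-explanation” step can be sketched as thresholding a heatmap and grouping adjacent hot cells into bounding boxes for an expert to confirm or reject. The function name `propose_regions`, the 4-connectivity rule, and the fixed threshold are illustrative assumptions, not a description of any particular labeling service.

```python
from collections import deque


def propose_regions(heatmap, threshold):
    """Return bounding boxes (row_min, col_min, row_max, col_max), one per
    4-connected group of cells whose value is >= threshold."""
    rows, cols = len(heatmap), len(heatmap[0])
    seen = [[False] * cols for _ in range(rows)]
    boxes = []
    for r in range(rows):
        for c in range(cols):
            if seen[r][c] or heatmap[r][c] < threshold:
                continue
            # Breadth-first search over one connected hot region.
            queue = deque([(r, c)])
            seen[r][c] = True
            r0, c0, r1, c1 = r, c, r, c
            while queue:
                y, x = queue.popleft()
                r0, c0 = min(r0, y), min(c0, x)
                r1, c1 = max(r1, y), max(c1, x)
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if (0 <= ny < rows and 0 <= nx < cols
                            and not seen[ny][nx]
                            and heatmap[ny][nx] >= threshold):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            boxes.append((r0, c0, r1, c1))
    return boxes


# Toy 4x4 heatmap with one three-cell region and one isolated hot cell.
heatmap = [
    [0.1, 0.1, 0.0, 0.0],
    [0.1, 0.9, 0.8, 0.0],
    [0.0, 0.7, 0.1, 0.0],
    [0.0, 0.0, 0.0, 0.6],
]
boxes = propose_regions(heatmap, threshold=0.5)
```

Each box is in heatmap-grid coordinates and would need scaling back to image coordinates before being shown to a rater.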

Although terms like machine learning development services and machine learning solutions often appear in procurement, the technical requirement remains reproducible datasets, auditable training runs, and versioned models.

Governance, privacy, and scope

In healthcare insurance, clinical imaging AI supports authorization and adjudication when scan-based evidence is required, making auditable model explanations (e.g., coordinates, heatmaps) essential. Imaging AI follows the same governance principles as intelligent document processing in healthcare: explanation-first design with mandatory human oversight. Cost and evaluation discussions often frame performance in terms of review time, false-alert cost, and clinical utility under regulatory constraints.
